LLM SEO: How to Optimize Content for ChatGPT, Claude, and Gemini Separately

ChatGPT, Claude, and Gemini each surface content differently. Here's the LLM-specific optimization playbook for B2B brands that want to get cited across all three.

How Visible Is Your Business in AI Search?

Find out in 60 seconds. Our free AI Visibility Checker scans ChatGPT, Perplexity, and Google AI Overviews for your brand.

Check Your AI Visibility

Platform-specific LLM SEO optimization signals for ChatGPT, Claude, and Gemini

One Content Strategy Won't Work for All Three

In early 2026, research from Superlines tracked the same brands across ChatGPT and Perplexity responses. The finding: a 14.8x sentiment gap between the two platforms for the same companies. One AI treated certain brands as credible authorities. The other treated them as minor players in the same category.

Same company. Same website. Same content. Radically different AI output.

This is the central problem with treating "LLM SEO" as a single discipline. ChatGPT, Claude, and Gemini each retrieve, evaluate, and synthesize content differently. A strategy built for one platform's retrieval logic won't automatically transfer to the others. The brands capturing citation share across all three major LLMs in 2026 are running platform-specific playbooks on top of a shared content foundation.

Here's how each platform works and what to do about it.

How LLMs Surface Content: The Shared Mechanism

Before the differences, the common ground. All three platforms use some variant of retrieval-augmented generation (RAG) when surfacing content-based answers.

The basic process:

1. User submits a query

2. The LLM retrieves a set of candidate documents from its index or live search

3. A quality and relevance scoring layer ranks those documents

4. The highest-scoring documents are fed into the generation context

5. The LLM synthesizes a response, citing selected sources
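The process above can be sketched in a few lines of Python. This is a toy illustration, not how any of the three platforms is actually implemented: the `Document` class, the keyword-overlap score, and the example.com URLs are all assumptions made for the example, and the final step only lists the sources it would cite instead of calling a model.

```python
from dataclasses import dataclass


@dataclass
class Document:
    url: str
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase, split on whitespace, and strip basic punctuation."""
    return {word.strip(".,?!:;\"'()").lower() for word in text.split()} - {""}


def retrieve(query: str, index: list[Document]) -> list[Document]:
    """Step 2: keep any document sharing at least one term with the query."""
    terms = tokenize(query)
    return [doc for doc in index if terms & tokenize(doc.text)]


def score(query: str, doc: Document) -> float:
    """Step 3: toy relevance score, the fraction of query terms found in the document."""
    terms = tokenize(query)
    return len(terms & tokenize(doc.text)) / len(terms) if terms else 0.0


def build_context(query: str, index: list[Document], top_k: int = 2) -> list[Document]:
    """Steps 2-4: retrieve, rank, and keep the top_k documents for generation."""
    candidates = retrieve(query, index)
    ranked = sorted(candidates, key=lambda d: score(query, d), reverse=True)
    return ranked[:top_k]


def answer(query: str, index: list[Document]) -> str:
    """Step 5: stand-in for generation; it only lists the sources it would cite."""
    sources = build_context(query, index)
    cited = ", ".join(doc.url for doc in sources) or "no sources"
    return f"Answer to '{query}' (sources: {cited})"


# Hypothetical index used only for illustration.
index = [
    Document("https://example.com/faq-schema", "How to add FAQPage schema markup to a page"),
    Document("https://example.com/pricing", "Pricing plans and billing"),
    Document("https://example.com/schema-guide", "Schema markup guide for articles"),
]
print(answer("how to add schema markup", index))
```

Real systems replace the keyword overlap with embedding similarity and learned quality scoring, but the shape is the same: content that never makes it through retrieval and ranking cannot be cited in the final step.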

The shared signals that feed steps 2-4:

Content depth. Comprehensive, specific coverage of a topic outperforms surface-level treatment across all three platforms.

Structured extractability. H2 sections with direct answers, FAQ sections, and tables are extracted more reliably than prose-heavy text.

Source credibility. Documents cited by other authoritative sources score higher in retrieval quality assessments.

Topical relevance. Content that explicitly addresses the user's specific question scores higher than content that covers a general topic area.

The platform-specific differences emerge in how each LLM weights these signals and what additional factors influence their retrieval logic.

Optimizing for ChatGPT

ChatGPT operates in two citation modes, each with its own optimization path.

Training data mode is how the base ChatGPT model works without live web access. Content influences training-data responses by being present, well-represented, and authoritative in the web content that was crawled before the training cutoff. To influence this:

Build brand mentions across a wide range of authoritative web sources (industry publications, partner sites, review platforms, press mentions)

Ensure your brand is associated with specific category terms consistently across external sources

Prioritize quality over quantity of external mentions. A citation in a respected industry publication matters more than twenty directory listings

SearchGPT (live search mode) is how ChatGPT responds when web search is enabled. The retrieval logic here is more similar to Perplexity, but with a key difference: ChatGPT's live search has only about 14% overlap with Google's top-10 results. It draws from a broader source pool.

ChatGPT live search optimization:

Content depth is the dominant signal. Articles under 1,500 words rarely make the quality threshold

Entity clarity: consistent, specific brand positioning language that appears the same way across your site and external profiles

Long-tail question coverage: ChatGPT searches tend toward specific, research-oriented queries where comprehensive content wins

The ChatGPT content format: comprehensive, deeply sourced articles with clear H2 structure, specific data points with citations, and FAQ sections. The quality bar is higher than for Perplexity. ChatGPT filters aggressively for depth.

Optimizing for Claude

Anthropic's Claude uses a different quality weighting that responds to specific content characteristics.

Claude places high value on:

Structured reasoning content. Claude is trained to produce and recognize well-structured analytical reasoning. Content that walks through a problem systematically (presenting evidence, acknowledging counterarguments, drawing conclusions) aligns with how Claude processes information. Blog posts that state a position in the first sentence and spend the rest of the article restating it don't match Claude's quality model.

Clear factual claims with attribution. Claude responds strongly to content that makes specific, verifiable claims with named sources. "Research from Semrush (June 2025) found X" is more Claude-citable than "studies show X." The attribution specificity matters.

Citation-ready structure. Claude's retrieval (when using live web access) extracts content from well-structured documents efficiently. The same H2 structure and FAQ format that works for other platforms applies.

Honest scope limitations. Claude's training emphasizes epistemic accuracy: acknowledging what is and isn't known, and what the limitations of a claim are. Content that presents nuanced, qualified positions tends to perform better with Claude's quality model than content that presents everything as definitive fact.

The Claude content format: analytical, well-structured articles with clear logical flow, specific sourced claims, acknowledged limitations, and transparent methodology where relevant. Thought leadership content with genuine analytical depth performs particularly well.
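One way to act on the attribution point above is to scan a draft for vague sourcing before publishing. The sketch below is a simple editorial lint, not a model of how Claude evaluates content; the phrase list in `VAGUE_PATTERNS` is a hypothetical starting point and would need tuning for a real style guide.

```python
import re

# Hypothetical list of vague-attribution phrases; extend to suit your own style guide.
VAGUE_PATTERNS = [
    r"\bstudies show\b",
    r"\bresearch shows\b",
    r"\bexperts say\b",
    r"\bit is well known\b",
]


def vague_attributions(text: str) -> list[str]:
    """Return the sentences that contain a vague attribution phrase."""
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    pattern = re.compile("|".join(VAGUE_PATTERNS), re.IGNORECASE)
    return [sentence for sentence in sentences if pattern.search(sentence)]


draft = "Studies show X works. Research from Semrush (June 2025) found X."
for sentence in vague_attributions(draft):
    print("Needs a named source:", sentence)
```

Each flagged sentence is a candidate for rewriting with a named source and date, in the pattern the section recommends.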

Optimizing for Gemini

Google's Gemini is deeply integrated with Google's existing search infrastructure, which creates specific optimization opportunities and requirements.

Google search signals carry over. Gemini has direct access to Google's index and draws heavily on Google's ranking signals. E-E-A-T, backlinks, domain authority, and featured snippet position all factor in. Optimizing for Google organic search has a more direct carry-over effect to Gemini citations than to ChatGPT or Claude.

Structured data emphasis. Gemini responds strongly to schema markup, particularly FAQPage schema, Article schema, and structured data that explicitly describes content type and organization. Google's investment in structured data as a search signal transfers directly to Gemini's retrieval logic.

E-E-A-T signals. Google's quality framework (Experience, Expertise, Authoritativeness, Trustworthiness) directly influences Gemini citations. Named authors with demonstrable expertise, external citations of your content, and organizational credibility signals (Google Business Profile, consistent NAP data, authoritative backlinks) all matter.

Featured snippet optimization. Pages that hold Google featured snippets have a high probability of being cited in Gemini responses for related queries. The content format that wins featured snippets (a direct answer in the first sentence of a section, in 40-60 words of precise text) is the right format for Gemini citation targeting.

Gemini content strategy: build the traditional SEO and E-E-A-T foundation first. Gemini sits on top of Google's quality signals. Author bios with credentials, external authoritative backlinks, FAQPage schema, and featured snippet targeting are the specific Gemini levers.
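For the FAQPage schema mentioned above, the markup is a JSON-LD object using the schema.org `FAQPage`, `Question`, and `Answer` types. The following is a minimal sketch that generates it from question-and-answer pairs; the helper name `faq_page_jsonld` and the sample FAQ text are illustrative assumptions, and the output should be validated with a structured data testing tool before it goes live.

```python
import json


def faq_page_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


# Sample content for illustration only.
faqs = [
    (
        "What is LLM SEO?",
        "LLM SEO is the practice of optimizing content to be cited by large language model systems.",
    ),
]
markup = faq_page_jsonld(faqs)
# JSON-LD is embedded in the page inside a script tag of this type.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from the same source as the visible FAQ text keeps the two in sync, which matters because the structured data is expected to match what is on the page.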

The Cross-Platform Content Stack

Platform-specific tactics layer on top of a shared content foundation that works across all three LLMs.

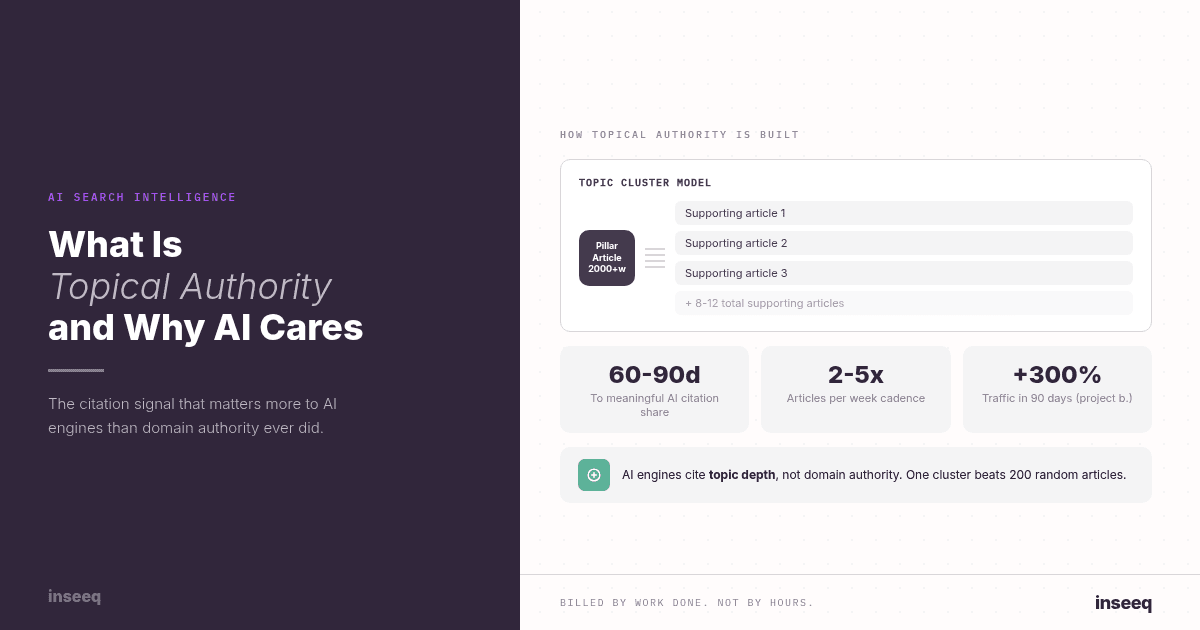

Topical authority clusters. All three platforms recognize domain-level topical authority. Building a pillar article plus 8-12 supporting articles per topic area is the foundation, regardless of which LLM you're optimizing for.

Direct-answer H2 structure. Content organized around specific questions, with direct answers in the first 40-60 words of each section, is extractable by ChatGPT, Claude, and Gemini. The format is universally effective.

FAQ sections with schema. FAQPage schema is effective across all three platforms. FAQ content directly maps onto how LLMs retrieve content to answer conversational queries.

Sourced, specific claims. "Research from [source] found [specific result]" is citable across all platforms. Vague assertions are not.

Consistent publishing cadence. Content freshness matters across all three platforms, particularly for Gemini (which inherits freshness weighting through Google's index) and for ChatGPT's live search. A cadence of 2-5 articles per week maintains freshness signals.
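The direct-answer guideline above (a 40-60 word answer opening each section) is easy to check mechanically. The sketch below assumes drafts are written in markdown with `## ` headings and blank lines between blocks; both function names are made up for this example.

```python
def opening_word_counts(markdown: str) -> dict[str, int]:
    """Map each '## ' heading to the word count of the first paragraph under it."""
    counts: dict[str, int] = {}
    heading = None
    # Assumes headings and paragraphs are separated by blank lines.
    for block in markdown.split("\n\n"):
        block = block.strip()
        if block.startswith("## "):
            heading = block[3:].strip()
        elif heading is not None and block and heading not in counts:
            counts[heading] = len(block.split())
    return counts


def outside_range(counts: dict[str, int], low: int = 40, high: int = 60) -> list[str]:
    """Return headings whose opening paragraph falls outside the low-high word range."""
    return [heading for heading, n in counts.items() if not low <= n <= high]


sample = (
    "## What is LLM SEO?\n\n"
    + " ".join(["word"] * 45)
    + "\n\n## How does it work?\n\nToo short."
)
counts = opening_word_counts(sample)
print(outside_range(counts))  # prints ['How does it work?']
```

A check like this only measures length, not whether the opening paragraph actually answers the heading's question, so it works best as a prompt for editorial review.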

| Signal | ChatGPT | Claude | Gemini |
|---|---|---|---|
| Content depth | High | High | High |
| External brand mentions | Very High | Medium | High (E-E-A-T) |
| Schema markup | Medium | Medium | High |
| Google ranking correlation | Low (14%) | Low | High |
| Structured reasoning | Medium | Very High | Medium |
| Freshness | Medium (live search) | Medium | High |
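The signal ratings above can also be kept as data, which makes it simple to pull a per-platform priority list. In this sketch the ratings are copied from the table with the parenthetical qualifiers dropped; the ordinal ranking in `RATING_ORDER` and the `priorities` helper are assumptions added for illustration, not measured weights.

```python
# Assumed ordering of the table's qualitative labels, lowest to highest.
RATING_ORDER = {"Low": 0, "Medium": 1, "High": 2, "Very High": 3}

# Ratings as given in the table (qualifiers such as "(14%)" omitted).
SIGNALS = {
    "Content depth": {"ChatGPT": "High", "Claude": "High", "Gemini": "High"},
    "External brand mentions": {"ChatGPT": "Very High", "Claude": "Medium", "Gemini": "High"},
    "Schema markup": {"ChatGPT": "Medium", "Claude": "Medium", "Gemini": "High"},
    "Google ranking correlation": {"ChatGPT": "Low", "Claude": "Low", "Gemini": "High"},
    "Structured reasoning": {"ChatGPT": "Medium", "Claude": "Very High", "Gemini": "Medium"},
    "Freshness": {"ChatGPT": "Medium", "Claude": "Medium", "Gemini": "High"},
}


def priorities(platform: str, minimum: str = "High") -> list[str]:
    """List the signals rated at or above `minimum` for the given platform."""
    floor = RATING_ORDER[minimum]
    return [
        signal
        for signal, ratings in SIGNALS.items()
        if RATING_ORDER[ratings[platform]] >= floor
    ]


for platform in ("ChatGPT", "Claude", "Gemini"):
    print(platform, "->", priorities(platform))
```

Content depth is the only signal rated High or above for all three platforms, which is consistent with treating it as part of the shared foundation.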

How inseeq Approaches Multi-LLM Visibility

inseeq's GEO strategy is built for cross-platform citation, not optimized for one LLM at the expense of the others.

The content layer addresses the shared foundation: topical authority clusters, direct-answer structure, FAQ sections with schema, consistent publishing cadence, and sourced specific claims. This universal layer drives citability across ChatGPT, Claude, and Gemini simultaneously.

The technical layer (Organization schema, Article schema, FAQPage schema, BreadcrumbList) covers the structured data requirements that Gemini specifically weights and that all platforms use for content parsing.

The off-site layer (third-party brand mentions, industry publication coverage, authoritative external citations) builds the entity recognition in ChatGPT's training-data model and the E-E-A-T signals that Gemini draws on.

The result is visibility that compounds across all three major LLMs rather than optimizing a single channel. As each platform's market share shifts, cross-platform topical authority is the investment that stays relevant.

Related reading: What Is Topical Authority and Why AI Search Cares About It More Than Google Did and How to Get Cited by ChatGPT, Perplexity, and Google AIO: What's Different for Each.

Frequently Asked Questions

What is LLM SEO? LLM SEO (also called GEO or AEO) is the practice of optimizing content to be cited by large language model systems like ChatGPT, Claude, and Gemini when they generate responses. It differs from traditional SEO in targeting citation behavior rather than ranking position. Core techniques include topical authority building, direct-answer content structure, schema markup, and consistent brand mention development.

How do I optimize content for LLMs? Start with the shared foundation: topical authority clusters, H2 sections with direct answers, FAQ sections with FAQPage schema, and sourced specific claims. Then layer in platform-specific tactics: brand mentions and entity presence for ChatGPT, structured analytical content for Claude, and E-E-A-T and featured snippet optimization for Gemini.

Is LLM SEO different from regular SEO? Significantly. Traditional SEO optimizes for ranking position on Google based on backlinks and keyword relevance. LLM SEO optimizes for citation in AI-generated responses based on topical authority, content extractability, and entity recognition. The two disciplines reinforce each other: strong SEO helps Gemini and Perplexity citation, but LLM SEO requires specific additional techniques.

How do I get cited by multiple AI platforms? Build the shared content foundation first: comprehensive topical authority clusters, direct-answer structure, FAQ schema, and consistent publishing. Then add platform-specific layers: external brand mentions for ChatGPT, analytical depth for Claude, and SEO/E-E-A-T for Gemini. The shared foundation does 80% of the work.

What content format do LLMs prefer? H2 sections with direct answers in the first sentence, FAQ sections with FAQPage schema, comparison tables for multi-option information, and sourced specific claims with attribution. Content depth (1,500-2,500 words per article) is required across all platforms. Thin content is reliably filtered out.

See Your LLM Citation Score

Before you optimize, you need to know where you currently stand across ChatGPT, Claude, and Gemini.

inseeq's free Growth Audit maps your current citation share across major AI platforms, identifies which competitor is being cited instead of you, and produces a prioritized LLM optimization roadmap.

Get your free Growth Audit and find out how you score across every LLM that matters.

Hans-Peter Frank

Co-founder
