Anthropic Accidentally Taught Marketing Leaders How to Run AI Agents Better

The Claude Code source leak revealed how top AI agents actually work. Here's what marketing leaders can steal from it to run their AI agents better.

How Visible Is Your Business in AI Search?

Find out in 60 seconds. Our free AI Visibility Checker scans ChatGPT, Perplexity, and Google AI Overviews for your brand.

Check Your AI Visibility

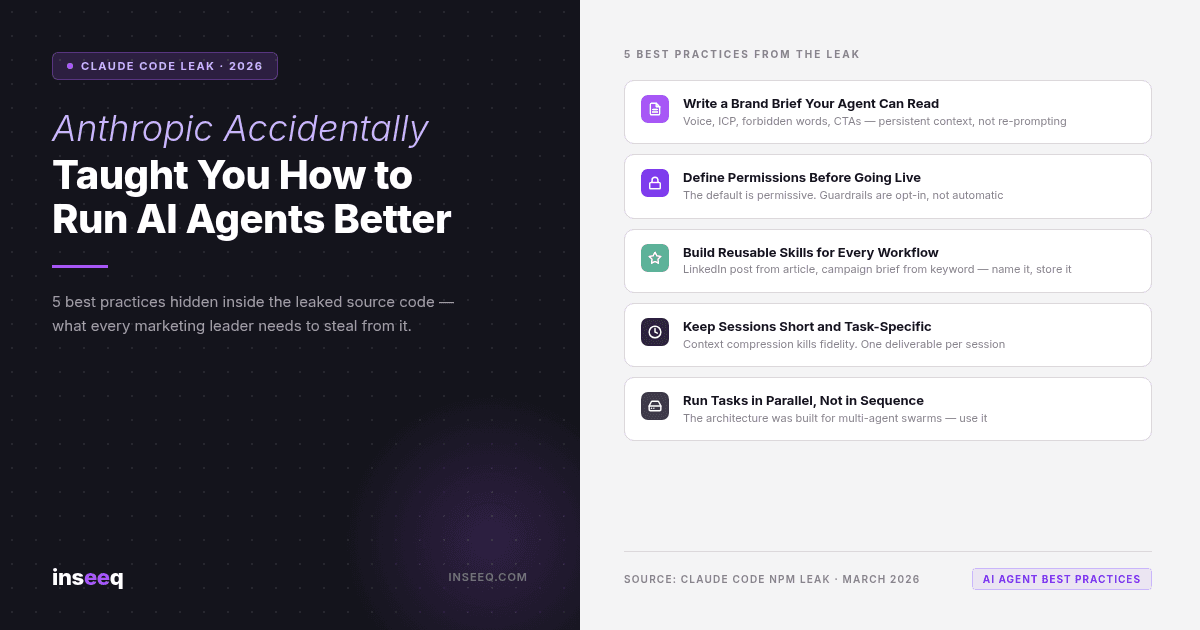

5 AI agent marketing best practices revealed by the Claude Code source leak — checklist graphic by inseeq

The Leak Nobody in Marketing Is Talking About (But Should Be)

On March 31, 2026, Anthropic accidentally shipped the entire source code of Claude Code to the public npm registry via an unobfuscated source map file. No hack required. Anyone who knew where to look could download 512,000 lines of internal TypeScript, complete with TODO comments, feature flags, and the full architecture of one of the most advanced AI coding agents on the market.

Developers noticed within hours. The story blew up on Reddit and X. Security researchers archived everything before Anthropic could respond.

Marketing leaders mostly ignored it.

That's a mistake.

Because buried inside that leak is something more useful than any AI marketing guide published in the past year: a blueprint of how production-grade AI agents actually work. Not how vendors say they work. Not the polished demo version. The real internals.

And once you understand those internals, you'll never set up an AI agent the same way again.

What the Source Code Actually Reveals About How AI Agents Work

Most marketing leaders use Claude like a very fast intern: type a prompt, read the output, repeat. The leaked source code shows that the system underneath is built for something far more sophisticated.

Here's what the architecture actually contains, translated from engineer to marketer.

Memory is deliberate, not automatic. The codebase includes a full memdir/ directory and an extractMemories service that continuously pulls facts from conversations and stores them for future sessions. This is not passive. The agent is making judgment calls about what to remember. If you've never explicitly told your AI agent what matters about your brand, it's been making those decisions for you. Every session.

Permissions are the invisible guardrails. There's a complete permission layer governing every tool call the agent can make. By default, Claude Code is permissive. It will do what you ask unless you've constrained it. For marketing teams with agents connected to live systems — CRMs, email platforms, social schedulers — this matters. The source code makes clear that guardrails are opt-in, not default.

Context compression is real and it costs you fidelity. The compact/ module automatically compresses long conversations when the context window fills up. This is why AI outputs tend to get blander and more generic as a session progresses. The system is summarizing its own memory to fit within limits, and summarization always loses nuance. The agent that wrote your brand-perfect intro paragraph at the start of a session is operating with less information by the end.

Skills are named, reusable workflows. The skills/ directory in the source shows that Claude Code treats repeatable tasks as first-class objects. You define a workflow once, name it, and invoke it without re-prompting. If you're currently copy-pasting the same instructions into every new session, you're doing by hand what the underlying architecture was designed to automate.

Multi-agent coordination is the intended model. The coordinator/ module and TeamCreateTool reveal a system built for parallel agent swarms, not linear conversation. One agent can research while another drafts while a third reviews. Sequential use is the slow path. The architecture was designed for parallelism.

Five Best Practices Stolen Directly From the Leak

The leak doesn't just reveal how the system works. It reveals how to use it better. Here are five practices derived directly from the source architecture.

1. Write a brand brief your agent can actually read.

The leaked code refers throughout to CLAUDE.md, a special file that Claude Code loads at the start of every session to establish operating context. Think of it as onboarding documentation for a new hire who forgets everything between shifts.

Your CLAUDE.md equivalent should include: your brand voice (with examples of what good and bad looks like), your target audience and their pain points, your approved CTAs and how to use them, language the agent should never use, and any compliance constraints. This isn't optional. Without it, the agent is guessing your preferences from scratch every single session.
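One way to keep that brief honest is to check it for gaps before an agent ever loads it. The snippet below is a minimal sketch, not anything taken from the leak: the section names and the sample brief are assumptions made up for illustration, and it only checks that each expected heading exists, not that the content under it is any good.

```python
# Hypothetical section names; rename them to match your own brief.
REQUIRED_SECTIONS = [
    "Brand voice",
    "Target audience",
    "Approved CTAs",
    "Never use",
    "Compliance",
]


def missing_sections(brief_text: str) -> list[str]:
    """Return the required sections that have no matching heading line."""
    headings = {
        line.lstrip("#").strip().lower()
        for line in brief_text.splitlines()
        if line.startswith("#")
    }
    return [s for s in REQUIRED_SECTIONS if s.lower() not in headings]


# Illustrative brief with two sections deliberately left out.
sample_brief = """\
# Brand voice
Plain and direct. Good: "Cut review time in half." Bad: "Synergize your workflow."

# Target audience
Technical founders at B2B startups.

# Approved CTAs
Book a free Growth Audit.
"""

gaps = missing_sections(sample_brief)
print(gaps)  # lists the sections the sample brief is missing
```

Running a check like this whenever the brief changes catches the most common failure: a brief that covers voice and audience but says nothing about forbidden language or compliance.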

2. Define permissions before you connect anything live.

If your AI agent has access to your CRM, your email platform, or your social scheduler, you need to know exactly what it's allowed to do. The leaked permission architecture makes clear that "connected" and "constrained" are two different things. Before you run your first automated campaign, map out every system the agent can touch and define what actions require human approval.
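That mapping exercise can be written down as a small policy table. The sketch below is an assumption about how you might structure it, not the permission layer from the leaked code: the system names, action names, and the three decision levels are all hypothetical. The one design choice worth copying is that anything not explicitly listed is denied.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ASK_HUMAN = "ask_human"
    DENY = "deny"


# Hypothetical (system, action) pairs; list the ones your agent can actually reach.
POLICY = {
    ("crm", "read_contact"): Decision.ALLOW,
    ("crm", "update_contact"): Decision.ASK_HUMAN,
    ("email", "draft_campaign"): Decision.ALLOW,
    ("email", "send_campaign"): Decision.ASK_HUMAN,
    ("social", "delete_post"): Decision.DENY,
}


def check(system: str, action: str) -> Decision:
    """Look up an action; anything not listed is denied by default."""
    return POLICY.get((system, action), Decision.DENY)


print(check("email", "send_campaign"))  # requires human approval
print(check("crm", "export_all"))       # unlisted, so denied
```

Even if your tooling enforces permissions differently, a table like this is a useful artifact to review with whoever owns each connected system.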

3. Build skills for your repeatable workflows.

If you write a LinkedIn post from every article you publish, that's a skill. If you generate a campaign brief from a keyword and an ICP, that's a skill. If you create a weekly email digest from your content calendar, that's a skill. Name them, document them, and store them somewhere the agent can load them without you re-explaining the process. This is how you get consistent output instead of output that varies based on how you're feeling when you write the prompt.
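In its simplest form, a skill is a named prompt template with declared inputs. The following is a rough sketch under that assumption; the skill names and template wording are invented for illustration and are not the format used in the leaked skills/ directory.

```python
from string import Template

# Hypothetical skill names and prompt templates.
SKILLS = {
    "linkedin_post_from_article": Template(
        "Write a LinkedIn post summarizing this article for $audience.\n"
        "Article:\n$article"
    ),
    "campaign_brief": Template(
        "Draft a campaign brief for the keyword '$keyword' aimed at this ICP: $icp."
    ),
}


def run_skill(name: str, **inputs: str) -> str:
    """Fill a named skill's template. Raises KeyError for an unknown skill or a missing input."""
    return SKILLS[name].substitute(**inputs)


prompt = run_skill("campaign_brief", keyword="AI visibility", icp="technical founders")
print(prompt)
```

Because a missing input raises an error instead of silently producing a half-filled prompt, the same skill yields the same instructions no matter who invokes it.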

4. Keep sessions short and task-specific.

Context compression is not a bug. It's an architectural necessity. But it means long sessions produce worse output than short, focused ones. The best AI agent workflows look like a series of short, purposeful sprints rather than one marathon session. One session per deliverable. Clear start, clear end, clear output.
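To see why long sessions lose detail, here is a toy model of compaction. It is only an illustration of the idea: the word budget, the `keep_recent` parameter, and the "first five words" summary are assumptions chosen to make the effect visible, and a real agent would use a model-written summary rather than truncation.

```python
def compact(messages: list[str], budget_words: int, keep_recent: int = 2) -> list[str]:
    """If the history exceeds the word budget, replace older messages with a crude summary."""
    total = sum(len(m.split()) for m in messages)
    if total <= budget_words or len(messages) <= keep_recent:
        return messages
    older, recent = messages[:-keep_recent], messages[-keep_recent:]
    # Crude stand-in for a real summary: keep the first 5 words of each older message.
    summary = " / ".join(" ".join(m.split()[:5]) for m in older)
    return ["[summary] " + summary] + recent


history = [
    "Brand voice: plain and direct, never use corporate buzzwords or exclamation marks",
    "Audience: technical founders, not enterprise CMOs, who distrust hype",
    "Draft the intro paragraph",
    "Now draft the closing paragraph",
]

compacted = compact(history, budget_words=20)
print(compacted[0])
```

In this toy run the instruction about corporate buzzwords does not survive the summary, which is the same kind of loss that makes late-session output drift. Starting a fresh session with the full brief avoids it.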

5. Run tasks in parallel, not in sequence.

The multi-agent architecture in the leak is designed for teams of agents working simultaneously. Apply that logic to your own setup. Research and brief creation don't need to happen sequentially. Drafting and image sourcing don't need to happen sequentially. Map your marketing workflows and identify where parallelism is possible. You'll cut production time significantly without adding headcount.
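The pairings above can be sketched as two parallel stages. This is a minimal illustration using Python's asyncio, not the coordinator from the leaked code: `run_agent` is a hypothetical stand-in that sleeps briefly where a real agent call would go, and the role names are made up.

```python
import asyncio


async def run_agent(role: str, task: str) -> str:
    """Hypothetical stand-in for a real agent call; sleeps briefly to simulate work."""
    await asyncio.sleep(0.01)
    return f"{role} finished {task}"


async def produce_article() -> list[str]:
    # Stage one: research and brief creation run at the same time.
    stage_one = await asyncio.gather(
        run_agent("researcher", "research"),
        run_agent("strategist", "brief creation"),
    )
    # Stage two: drafting and image sourcing run at the same time,
    # but only after stage one has finished.
    stage_two = await asyncio.gather(
        run_agent("writer", "drafting"),
        run_agent("image_scout", "image sourcing"),
    )
    return list(stage_one) + list(stage_two)


results = asyncio.run(produce_article())
print(results)
```

The structure matters more than the code: tasks inside a stage have no dependency on each other, while the boundary between stages marks a real dependency.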

Why Context Is the New Creative Brief

The CLAUDE.md concept from the leak points to a deeper truth about working with AI agents at scale.

Every marketing team has a creative brief process. Before any agency, freelancer, or new employee touches a campaign, you hand them the brief: who the audience is, what the brand sounds like, what we're trying to achieve, what we've tried before, what's off limits. The brief is the context. Without it, everyone starts from zero and you spend half the project correcting misalignment.

AI agents need exactly the same thing. The difference is that they can't read between the lines, infer from experience, or ask clarifying questions the way a human can. They work literally from what they're given. Which means the brief needs to be more complete, not less.

This is where most marketing AI setups break down. The context is either missing entirely, scattered across different documents, or rebuilt from scratch every session because nobody thought to persist it.

inseeq was built specifically around this problem. The platform treats brand context, audience definitions, voice guidelines, and editorial rules as first-class objects that live in a structured context layer, not in someone's Google Drive or copy-pasted into every prompt. When an inseeq agent writes an article, generates a social post, or plans a content calendar, it loads that context automatically from a centralized, versioned store.

The result is what the architecture revealed in the Claude Code leak was designed to produce: consistent, brand-correct output across sessions, agents, and time. No re-briefing. No drift. No outputs that read like they came from a different team.

That's not a product pitch. It's the logical conclusion of what the leak itself reveals: the context layer is the most important thing in an AI agent setup, and almost nobody is managing it deliberately.

What Happens When You Don't Set Context Properly

The consequences of skipping context setup are predictable, and they compound over time.

Off-brand copy compounds fast. An agent without a brand brief will write content that's technically correct and tonally wrong. At low volume, this is a minor annoyance. At the publishing cadence most AI-assisted teams are targeting (3-5 pieces per week), it becomes a brand consistency crisis within a month.

Session drift is invisible until it isn't. Each session without persistent context starts fresh. The agent doesn't remember that you hate corporate buzzwords, that your ICP is a technical founder not an enterprise CMO, or that you never use em dashes. You'll catch it in the output, but by then you've already spent time on revisions that good context setup would have prevented.

Hallucination risk scales with complexity. When an agent doesn't have reliable context about your products, pricing, and past results, it fills the gap with plausible-sounding content. The more autonomous your setup, the further that fabricated content can travel before a human sees it.

Parallel agents without shared context produce incoherent outputs. The multi-agent architecture from the leak is only useful if all agents draw from the same context source. If your research agent and your drafting agent have different versions of your brand voice, the output will read like it was written by two different people. Because it was.

The companies getting real results from AI-assisted marketing — not demo results, but sustained week-over-week output that actually converts — have solved the context problem first. The Claude Code leak just happened to show, in 512,000 lines of TypeScript, exactly why that's true.

Frequently Asked Questions

What is the Claude Code source leak?

On March 31, 2026, Anthropic accidentally included an unobfuscated source map in their Claude Code npm package. This made the full TypeScript source code of Claude Code publicly accessible via Anthropic's R2 storage bucket without any authentication. The repository at github.com/instructkr/claude-code mirrors the exposed snapshot for security research purposes.

What is a CLAUDE.md file and should marketing teams use one?

CLAUDE.md is a configuration file that Claude Code loads at the start of every session to establish operating context, similar to an onboarding document for the agent. Marketing teams using any AI agent should maintain an equivalent document covering brand voice, audience definitions, approved messaging, and forbidden language. Without it, the agent infers context from scratch every session.

Does the leak expose any data about companies that use Claude?

No. The leak exposed source code about how Claude Code works, not customer data or conversation logs. The security concern is that competitors and researchers can now reverse-engineer Anthropic's architectural decisions and upcoming features (visible via feature flags in the code).

How does context compression affect AI marketing output?

When a conversation session runs long, Claude's context compression module automatically summarizes earlier exchanges to fit within token limits. This means the agent progressively loses nuance from the start of the session. Practically: shorter, focused sessions produce more consistent output than marathon sessions.

What's the difference between running one AI agent and running multiple in parallel?

A single agent works sequentially: it finishes one task before starting the next. Multi-agent setups run tasks simultaneously using a coordinator that distributes work across specialized agents. For marketing, this means research, drafting, and image sourcing can happen at the same time rather than in sequence, cutting production time significantly.

Is it safe to use Claude for marketing after this leak?

The leak was an operational security failure, not a model compromise. Claude's underlying capabilities and safety systems were not affected. The main practical consideration for marketing teams is vendor reliability: Anthropic had two major exposure incidents within one week (the Claude Code leak and a CMS misconfiguration exposing internal model documents). Teams should review what data passes through Claude integrations and ensure their vendor contracts include breach notification clauses.

How can inseeq help with AI agent context management?

inseeq manages the context layer that AI agents need to produce consistent, brand-correct output. Brand voice, audience profiles, editorial rules, and content strategy are stored in a structured, versioned system that agents load automatically at the start of every session. This eliminates the re-briefing problem and prevents brand drift across sessions and agents.

Get Your AI Agent Setup Right From the Start

The Claude Code leak handed marketing leaders a rare gift: a look inside how production AI agents are actually architected. The teams that pay attention will build AI marketing setups that produce consistent results. The teams that don't will keep getting output that drifts, varies, and requires constant human correction.

Context is the foundation. Everything else builds on it.

If you want to see how inseeq structures the context layer for AI-assisted marketing, and what that looks like in practice for B2B teams, book a free Growth Audit at inseeq.com/contact. No pitch deck. We look at your current setup, identify the gaps, and show you specifically what needs to change.

Hans-Peter Frank

Co-founder
